Abstract

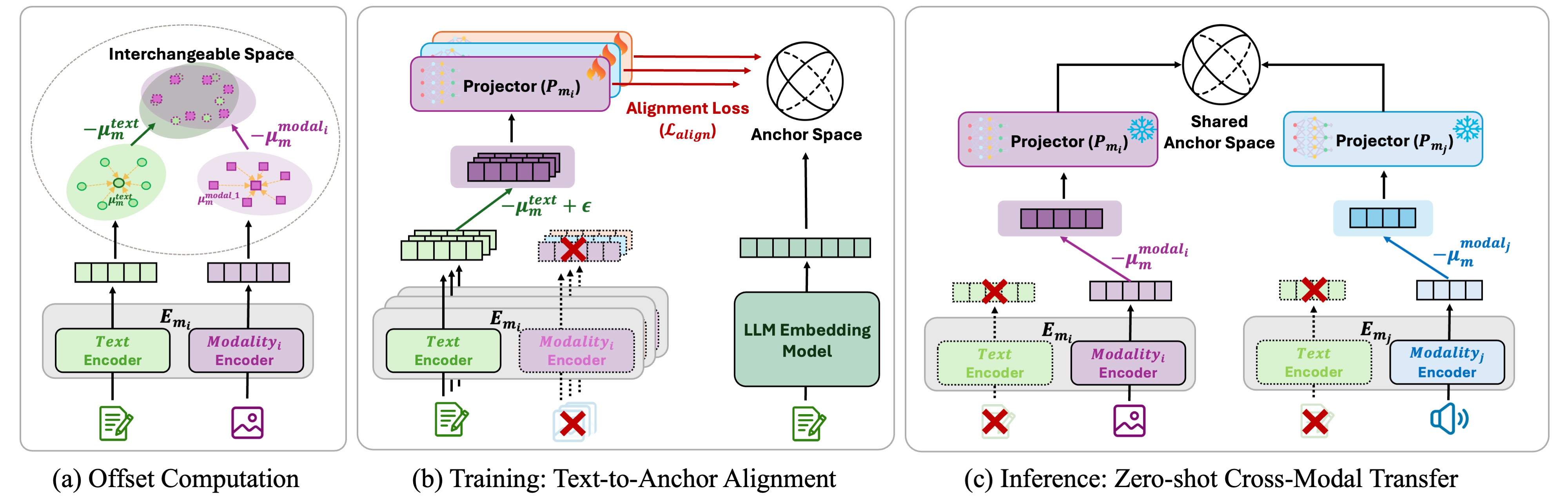

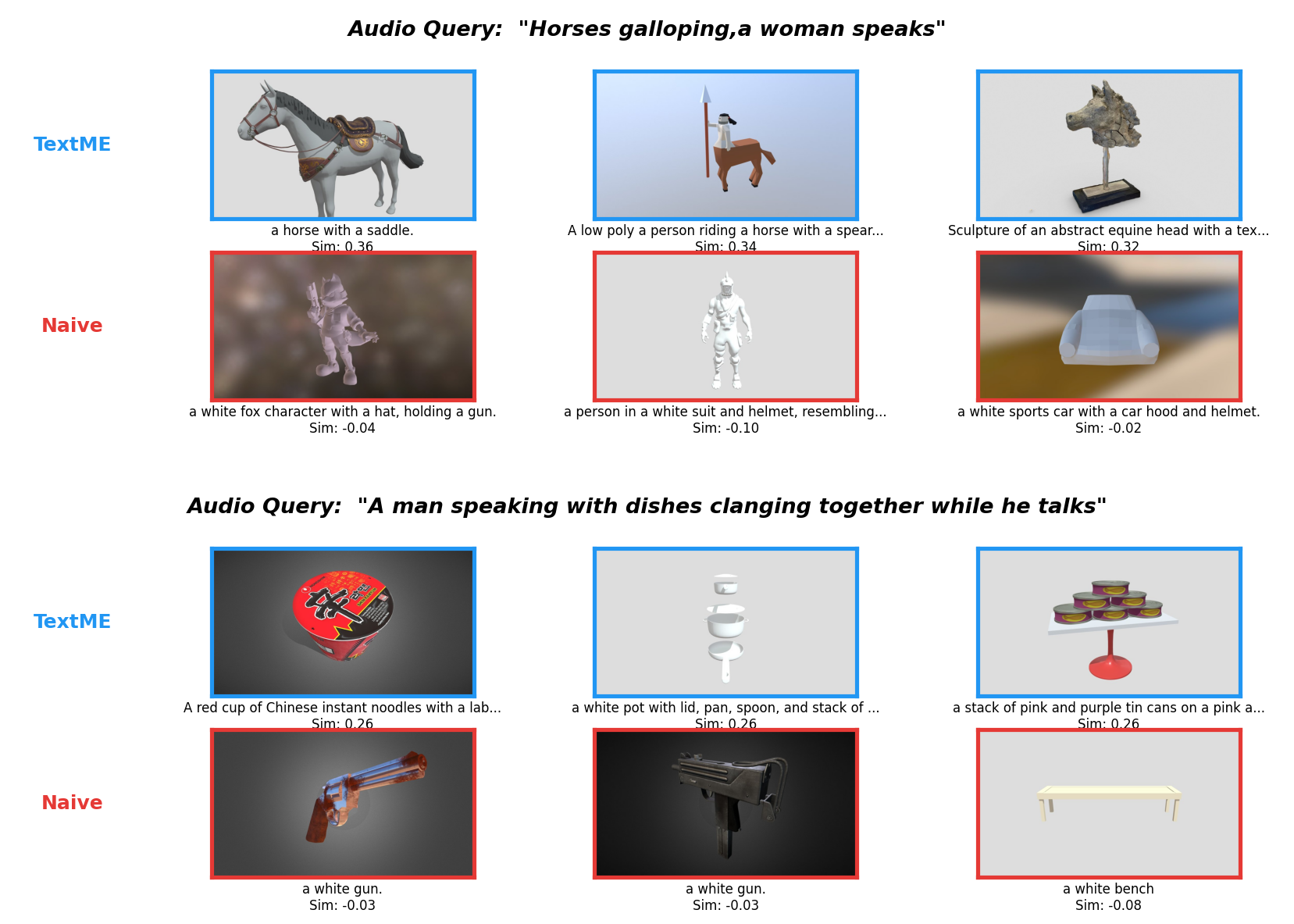

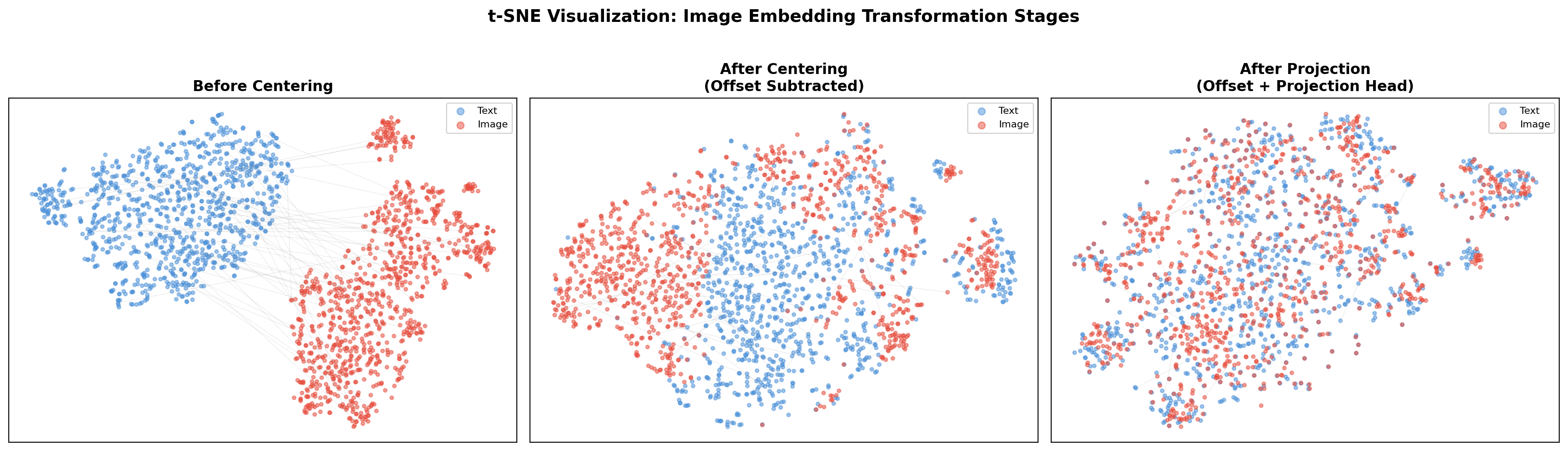

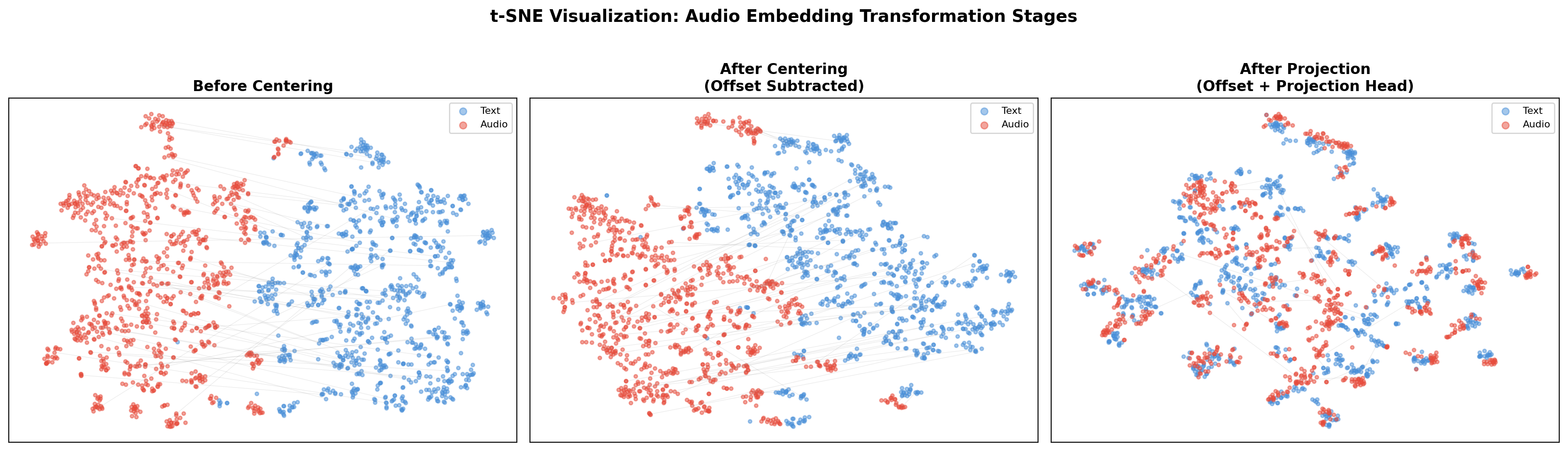

Expanding multimodal representations to novel modalities is constrained by reliance on large-scale paired datasets (e.g., text–image, text–audio, text–3D, text–molecule), which are costly and often infeasible in domains requiring expert annotation such as medical imaging and molecular analysis. We introduce TextME, the first text-only modality expansion framework, to the best of our knowledge, projecting diverse modalities into LLM embedding space as a unified anchor. Our approach exploits the geometric structure of pretrained contrastive encoders to enable zero-shot cross-modal transfer using only text descriptions, without paired supervision. We empirically validate that such consistent modality gaps exist across image, video, audio, 3D, X-ray, and molecular domains, demonstrating that text-only training can preserve substantial performance of pretrained encoders. We further show that our framework enables emergent cross-modal retrieval between modality pairs not explicitly aligned during training (e.g., audio-to-image, 3D-to-image). These results establish text-only training as a practical alternative to paired supervision for modality expansion.